Paper Explainer: Precision Corrections to Fine Tuning in SUSY

This paper is part of a pair with the paper I wrote about here. We were interested in determining how constrained a particular theoretical extension of the Standard Model, supersymmetry (or "SUSY"), is by the present experimental results (as of this last summer's ICHEP meeting). The previous paper was the "phenomenology" paper: we took the experimental results, reinterpreted them in the context of a number of interesting models, and calculated the amount of "tuning" that would be present in each model.

The paper we just put out is more of the "theory" paper, the paper that outlines how we did the tuning calculations we used in the phenomenology paper. The results are somewhat technical, so I will spend a bit more time describing the problem in general, and then talk in broad terms about what this paper adds to the discussion. So first I should describe a bit what we mean by "tuning," and why theoretical physicists care so much about it.

The question of "fine tuning" gets back to one of the big theoretical problems that is present in the Standard Model of particle physics: the problem of the Higgs boson mass. I wrote a bit about this in my series of articles for the Boston Review (in particular, here), but let's get specific.

The LHC has discovered the Higgs boson, and measured its mass: about 125 GeV. Even before we discovered the Higgs, we knew its mass was somewhere in the energy range of about 1 GeV up to about 2000 GeV. This is low compared to all the possible energies that physics could operate at, and so presented a problem.

In our theories about how particles work, there are a lot of contributions to what we measure as the mass of a particle. There's the "bare mass" of the Higgs, the mass that the Higgs would have if it were the only quantum field around, and then there are a bunch of correction mass terms that come from the interaction of the Higgs with other quantum fields. The sum of all these terms gives the physical mass of the Higgs, the thing we measure to be 125 GeV.

And here's where the problem comes in: the Higgs boson is a scalar (that is, it has zero spin). Scalar quantum fields get correction terms to their masses which are sensitive to physics at arbitrarily high energies. So if the "bare" mass of the Higgs is $\mu$, and there's some quantum field that interacts with the Higgs that has a characteristic energy scale of $\Lambda$, then the physical mass of the Higgs would be something like \begin{equation} m_h^2 = \mu^2 + c \Lambda^2. \end{equation}

Here $c$ is some number that depends on the details of the quantum field and how it interacts with the Higgs. Typically, these are numbers that are around $1/100$, and the overall sign can be negative, so the bare mass and the corrections can cancel. The problem is that this schematic equation is true no matter how large $\Lambda$ is: it could be on the order of the Planck scale ($10^{19}$ GeV), and it would still contribute in this way to the Higgs mass.

Thus, for the measured Higgs mass to be the 125 GeV we find, either there can be no new physics at any high energy scale that interacts with the Higgs (which is possible, but there are problems that require new physics), or the bare mass and the corrections need to be exquisitely balanced so that their sum (or difference) is small. That is, the corrections need to be fine tuned.

The higher the scale $\Lambda$, the more finely tuned the corrections need to be: you might have to make the bare mass and the correction $c\Lambda^2$ the same to 2 digits, or 10 digits, or 30 digits, and in those cases, we'd start to suspect that there's some conspiracy going on. That is, the bare mass and the corrections aren't random numbers that just happen to cancel, but really there's some deeper reason that allows them to be so nearly identical.
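To put rough numbers on "2 digits, or 10 digits, or 30 digits," here is a minimal sketch using the schematic formula $m_h^2 = \mu^2 + c\Lambda^2$ with the values quoted above ($m_h = 125$ GeV, $c \sim 1/100$). The function name and the choice to count digits as $\log_{10}(c\Lambda^2/m_h^2)$ are my own illustrative assumptions, not a definition taken from the paper.

```python
import math

M_H = 125.0      # measured Higgs mass in GeV (from the text)
C = 1.0 / 100.0  # typical size of the coefficient c (from the text)

def digits_of_cancellation(cutoff_gev, c=C, m_h=M_H):
    """Rough number of decimal digits to which mu^2 and c*Lambda^2 must agree.

    Illustrative assumption: if the correction is N times larger than m_h^2,
    the bare term must cancel it to about log10(N) digits.
    """
    correction = c * cutoff_gev**2
    return max(0.0, math.log10(correction / m_h**2))

# Scan a few hypothetical new-physics scales, up to the Planck scale.
for cutoff in (1e3, 1e4, 1e10, 1e19):
    digits = digits_of_cancellation(cutoff)
    print(f"Lambda = {cutoff:.0e} GeV -> about {digits:.1f} digits of cancellation")
```

With these inputs a scale of $10^4$ GeV needs roughly two digits of cancellation, while the Planck scale needs more than thirty.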

To see why this is a unique problem for the Higgs, let me show you what the form of the corrections would be for the electron mass. Instead of corrections that go directly like the high energy scale $\Lambda$, the corrections go like the log of $\Lambda$: \begin{equation} m_e = m+c\log\Lambda \end{equation} The amazing thing about logarithms is that they take very big numbers and make them very small very fast. Even if the scale $\Lambda$ was $10^{19}$ GeV, it would give only a 10% correction to the electron mass (which is $5.11 \times 10^{-4}$ GeV). So you don't worry about fine tuning for the electron, or any spin-1/2 fermion, since you don't have to carefully balance the bare masses and the quantum corrections. Any correction is made small, due to the fact that fermions get log-corrections. The Higgs, as a scalar, is not so protected.
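As a rough numerical check of the logarithm's taming effect, the sketch below compares the two kinds of correction at the same cutoff. For the electron it assumes the textbook one-loop QED estimate $\delta m/m \simeq (3\alpha/4\pi)\ln(\Lambda^2/m^2)$ with a hard cutoff; that specific formula and the function names are assumptions on my part, since the equation above is only schematic. The scalar case reuses $c\Lambda^2/m_h^2$ with $c = 1/100$.

```python
import math

ALPHA = 1.0 / 137.036  # fine-structure constant
M_E = 5.11e-4          # electron mass in GeV (from the text)
M_H = 125.0            # Higgs mass in GeV (from the text)

def electron_fractional_shift(cutoff_gev, m=M_E, alpha=ALPHA):
    """Assumed one-loop estimate: delta m / m = (3 alpha / 4 pi) ln(Lambda^2 / m^2)."""
    return 3.0 * alpha / (4.0 * math.pi) * math.log(cutoff_gev**2 / m**2)

def scalar_fractional_shift(cutoff_gev, c=0.01, m_h=M_H):
    """Schematic quadratic correction relative to m_h^2: c * Lambda^2 / m_h^2."""
    return c * cutoff_gev**2 / m_h**2

planck = 1e19  # GeV
print(f"electron: {electron_fractional_shift(planck):.2f}")
print(f"Higgs:    {scalar_fractional_shift(planck):.1e}")
```

Under these assumptions the electron shift at the Planck scale stays in the ten-percent range, while the scalar shift is larger than the Higgs mass squared by some thirty orders of magnitude.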

This problem has been long-known, and has many solutions. The most popular is supersymmetry. Supersymmetry introduces a new set of quantum fields, one for each field that we know exists (and any additional new fields we have not yet discovered would, by assumption, also come with a partner). These fields are carefully designed so that they contribute equal and opposite corrections to the Higgs mass: \begin{equation} m_h^2 = \mu^2 + c\Lambda^2 -c\Lambda^2. \end{equation} So everything cancels and the physical mass is just the bare mass, and there's no need to tune anything.

Of course, you might say "well, you picked the two corrections to be equal and opposite, so that's still tuning." However, the model of supersymmetry gives a reason for these two corrections to be exactly equal and opposite (by setting the spin-statistics of a field and its superpartner to be opposite). We physicists get uncomfortable with tuning; we're much happier if there's a dynamical reason that things are the same. Supersymmetry provides such a reason.

However, supersymmetry cannot be a perfect theory: there are no superpartners of electrons with the same mass as the electron, after all. The symmetry is "broken" at some scale $\Lambda_{SUSY}$ and the superpartners must be heavier than the particles we know and love. OK, that's fine, but it implies that the Higgs mass must get a correction that goes like \begin{equation} m_h^2 = \mu^2 + c\Lambda_{SUSY}^2. \end{equation} Since the Higgs mass is 125 GeV, this implies that $\Lambda_{SUSY}$ shouldn't be that much higher, if there is not to be too much tuning again.

The problem now is that we haven't found the new superpartners that should be doing this job of canceling the corrections to the Higgs mass. This is pushing $\Lambda_{SUSY}$ higher and higher, and reintroducing tuning to supersymmetry. There will always be less tuning in supersymmetry than in a non-supersymmetric model, but if our theory cannot survive without two numbers having to cancel to one part in 100, or 1000, are we still happy with that? Many theoretical physicists would say "no."

Again, this problem has been recognized for a while, and there are many excellent papers looking specifically at how much tuning can be allowed given the non-observation of superparticles.

In particular, we tend to be interested in the lack of discovery (and thus implied large mass) of three particles in supersymmetry:

- The partner of the Higgs, the higgsino. This has the same bare mass term as the Higgs itself ($\mu$), and so it should be relatively light if the Higgs itself is not tuned. However, higgsinos are very hard to produce directly at the LHC, so the bounds aren't that stringent (yet).

- The partner of the top quark, the stop squark. The top is the heaviest fermion, so it couples the most to the field that "gives mass," the Higgs. So its partner also couples strongly, and the heavier the stop is, the more tuned the Higgs must be.

- The partner of the gluon, the gluino. This doesn't directly contribute to the Higgs mass, but it does affect the stop mass, again due to these quantum corrections. Since the Higgs is light, and we want the stop to be light, the gluino must also be somewhat light.

So if you say that your model should not be tuned to more than, say, 1 part in 10, you can place upper limits on the possible masses of the higgsino, stop, and gluino, and then ask "experimentally, does the LHC rule out this region?" If so, your theory cannot be less than 10% tuned (or 1% or 0.1%, or whatever your benchmark value might be). Our previous paper addressed the question "what models of supersymmetry can still be 10% tuned or less?" and we found some interesting directions there.

Again, all of this has been long recognized, so what's our value-added in this paper? What we did here was recognize that the way we theorists calculate tuning is somewhat inadequate. There are inherent ambiguities in this calculation, but those aren't really the problem. The problem is that the parameters we usually take into consideration when calculating tuning are parameters that are defined at very high energies -- they are fundamental parameters of the supersymmetric model, parameters like "the mass of the stop." However, these are not the same as the parameters we can measure at the LHC: the "mass of the stop" at high energies gets corrections (quantum corrections, similar to the ones that cause tuning problems for the Higgs) as you move to low energies, and that makes the "mass of the stop" that the LHC sets limits on different from the mass of the stop that enters into the tuning calculation. Our paper systematically takes into account these differences.

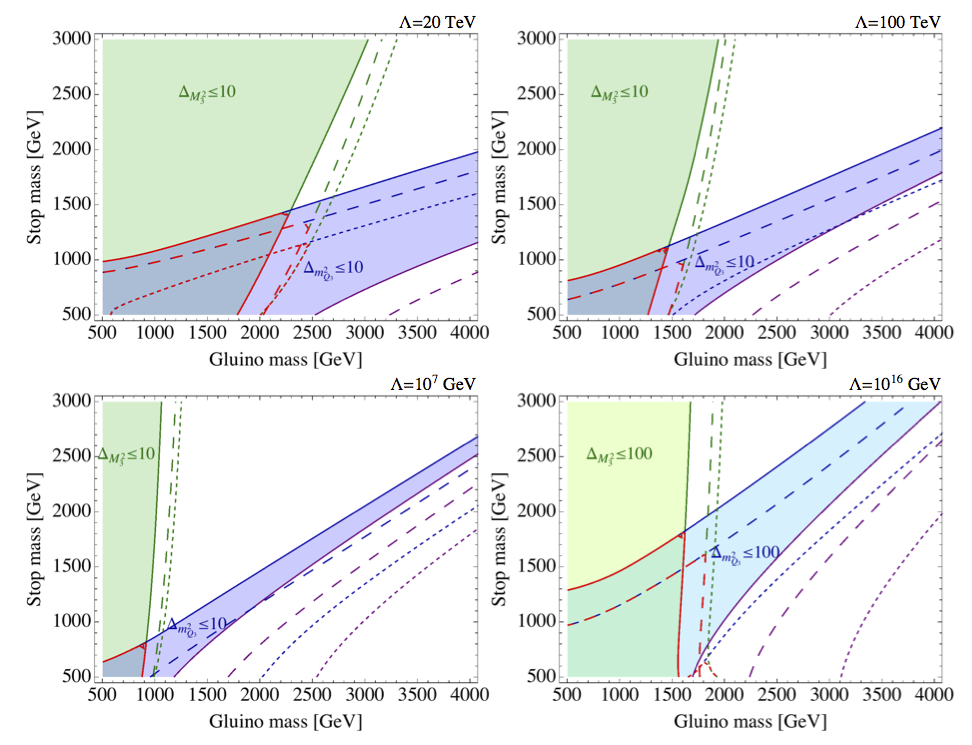

Example of the "tuning wedge" that our improved calculation results in.

I'm not going to go through each of the corrections we applied to move from the high-energy parameters to the well-defined low-energy masses we can search for at the LHC, but I do want to point out some of the interesting consequences. First, we find that the tuning from the stop gets tied in to the tuning from the gluino, and vice versa. Usually these are considered separate, so in a plot of stop mass versus gluino mass, a region defined by a particular value of tuning is a rectangle. Instead, we find that light stops require light gluinos, and vice versa. So our regions of tuning look like wedges.

Ratio of the low-energy mass to the high-scale mass for the gluino (blue) and stop (red), as a function of the supersymmetry breaking scale. The dotted red lines demonstrate the reduction of the stop mass as the other squarks are made heavier.

Second, usually we can ignore the contributions of the other squarks in the tuning calculation. This means you can imagine all the other squarks are much heavier than the stop and your theory is still not tuned (at least, in this formal definition of tuning). However, when we work to move from the high energy mass parameters to the physical masses, we discover that the other squarks push the stop mass down. If they are too heavy, the stop mass squared becomes negative, which is a bit of a problem. So this forces the other squarks to not be too heavy. Again, interesting.

Finally, to reiterate a point that shows up in the standard calculation (so isn't unique to our work): we are "running" a mass down from high energies (the scale at which supersymmetry gets broken, which we call $\Lambda$ in our paper) to the energies of the LHC. The higher $\Lambda$ is, the more "running" of these parameters occurs. When this happens, you tend to find that, for a given amount of tuning (which we call $\Delta$, with 10% tuning corresponding to $\Delta = 10$), higher $\Lambda$ implies lower stop and gluino masses at the LHC.

That is, if you want your model to not be experimentally ruled out, you need either more tuning or heavier particles. Thus, if you say that you think supersymmetry isn't that tuned, the scale of supersymmetry breaking needs now to be very low, maybe only 20000 GeV. This isn't accessible at the LHC, but it presents interesting theory directions.
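To illustrate how a fixed tuning budget $\Delta$ translates into lower allowed masses as $\Lambda$ grows, here is a minimal sketch. It is not the precision calculation of our paper. It assumes the commonly quoted leading-log estimate for the stop contribution, $|\delta m_H^2| \simeq \frac{3 y_t^2}{8\pi^2}\, 2 m_{\tilde t}^2 \ln(\Lambda/m_{\tilde t})$ with two equal-mass stops and no mixing, together with $\Delta = 2|\delta m_H^2|/m_h^2$. The Yukawa value and the function names are likewise illustrative assumptions.

```python
import math

M_H = 125.0   # Higgs mass in GeV (from the text)
Y_TOP = 0.94  # approximate top Yukawa coupling (assumption)

def delta_stop(m_stop, susy_scale, y_top=Y_TOP, m_h=M_H):
    """Assumed leading-log tuning measure from two equal-mass stops, no mixing."""
    shift = (3.0 * y_top**2 / (8.0 * math.pi**2)
             * 2.0 * m_stop**2 * math.log(susy_scale / m_stop))
    return 2.0 * shift / m_h**2

def max_stop_mass(delta_max, susy_scale):
    """Largest stop mass (GeV) with Delta <= delta_max, found by bisection."""
    # Delta rises monotonically with m_stop below susy_scale * exp(-1/2).
    lo, hi = 1.0, susy_scale * math.exp(-0.5)
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if delta_stop(mid, susy_scale) > delta_max:
            hi = mid
        else:
            lo = mid
    return lo

# Delta = 10 corresponds to 10% tuning, as in the text.
for scale in (2e4, 1e6, 1e16):
    limit = max_stop_mass(10.0, scale)
    print(f"Lambda = {scale:.0e} GeV: Delta = 10 allows stops up to ~{limit:.0f} GeV")
```

In this simplified estimate the allowed stop mass at fixed $\Delta$ shrinks as $\Lambda$ increases, which is the qualitative trend described above. The gluino and other-squark effects discussed earlier are not included.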

So what we are saying in this paper is that tuning is a tricky topic: how much tuning is "too much" is a matter of opinion. However, if you are going to do a tuning calculation, you should do it right, and we do just that. When we do, we find that the regions of a fixed amount of tuning change significantly: either both the stop and gluino should be light, or both should be heavy, but not one light and one heavy. Further, the other squarks can't be pushed off to high masses. Finally, the viable models of low tuning appear to imply very low supersymmetry breaking scales. All of this is at least a starting point if you want to think about new ways to build a non-tuned model of supersymmetry that works with the current LHC data.

Tuning bounds for different values of the supersymmetry breaking scale, as a function of gluino and stop mass. The red outlined region is the "wedge" where both the stop and gluino contributions to the Higgs mass are below the indicated amount of tuning.